New Delhi, Feb 24, 2026 08:42 AM IST

AI startup Anthropic has accused Chinese AI companies DeepSeek, Moonshot AI, and MiniMax of orchestrating “industrial-scale” distillation attacks on its AI models. The maker of Claude said in a post on X that the Chinese labs created more than 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, effectively extracting its capabilities to improve their own models.

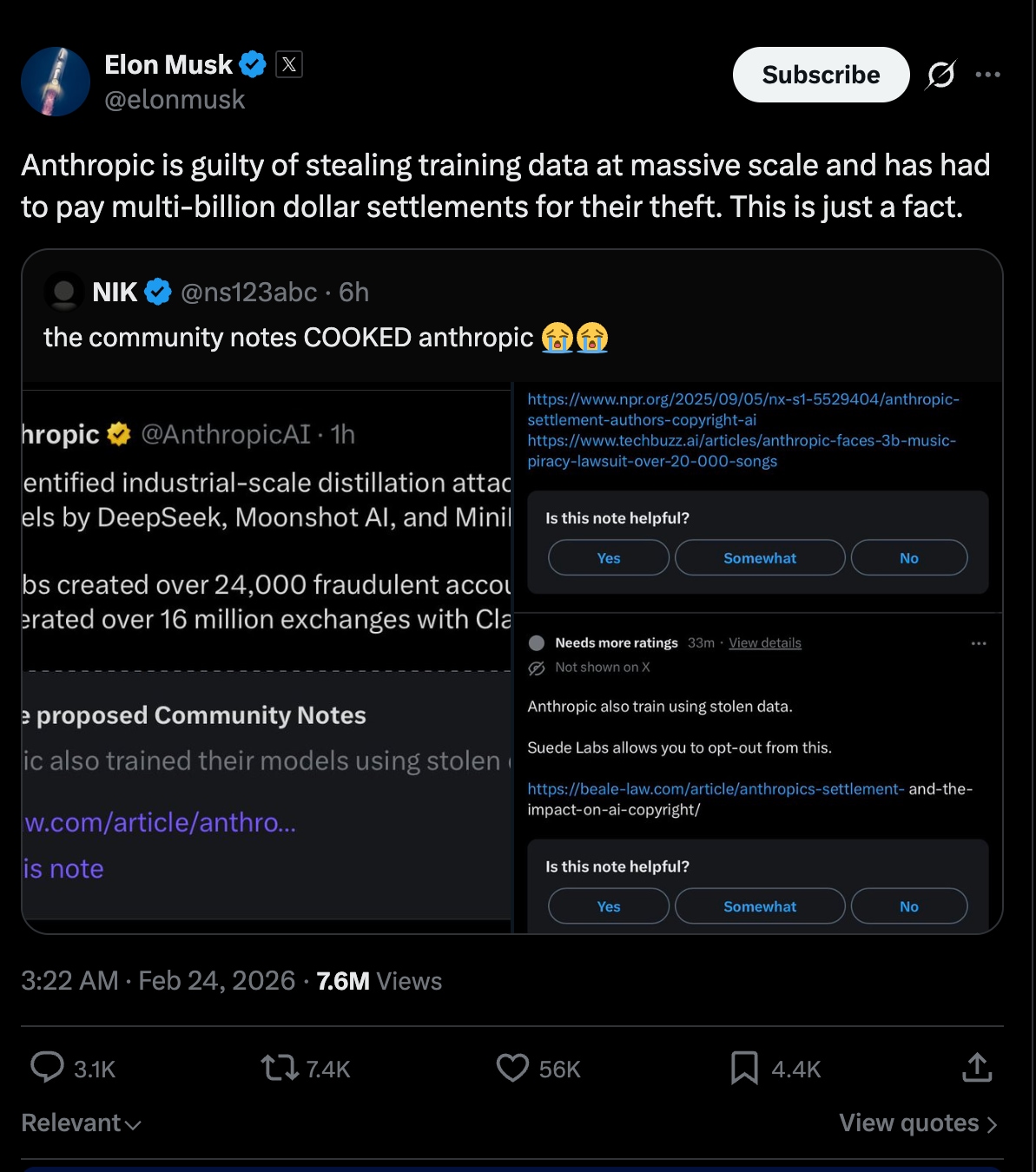

However, Elon Musk soon weighed in, claiming that the US startup itself is “guilty” over past training data practices, escalating a broader dispute over AI copying and data ethics.

Anthropic, in a post on its official blog on Monday, February 23, said that the three AI laboratories had illicitly extracted Claude’s capabilities to enhance their own models. “These labs generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts, in violation of our terms of service and regional access restrictions,” the company said.

We’ve identified industrial-scale distillation attacks on our models by DeepSeek, Moonshot AI, and MiniMax.

These labs created over 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, extracting its capabilities to train and improve their own models.

— Anthropic (@AnthropicAI) February 23, 2026

According to the company, the labs used a technique called distillation, which involves training a smaller or less capable model on the outputs of a stronger one. Distillation is widely used and is a legitimate way to train AI models; frontier AI labs occasionally distill their own models to create smaller, cheaper versions for their customers.
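To make the idea concrete, here is a toy sketch of distillation in Python. It is an illustration under stated assumptions, not how Anthropic or any of the named labs actually train models: the "teacher" is a hypothetical fixed function (`teacher_prob`) standing in for a strong model, and the "student" is a tiny logistic model (the hypothetical `distill` function) fitted only to the teacher's answers on a set of queries. Real large language model distillation typically fine-tunes a student on text generated by the teacher, at vastly larger scale.

```python
import math
import random


def teacher_prob(x: float) -> float:
    """Hypothetical stand-in for a strong 'teacher' model.

    Returns the teacher's probability that input x belongs to class 1.
    """
    return 1.0 / (1.0 + math.exp(-(3.0 * x - 1.0)))


def distill(num_queries: int = 1000, epochs: int = 500,
            lr: float = 1.0, seed: int = 0) -> tuple[float, float]:
    """Fit a small logistic 'student' using only the teacher's outputs."""
    rng = random.Random(seed)

    # Step 1: send queries to the teacher and record its responses.
    queries = [rng.uniform(-2.0, 2.0) for _ in range(num_queries)]
    targets = [teacher_prob(x) for x in queries]

    # Step 2: train the student p = sigmoid(w*x + b) to match the
    # teacher's soft labels with full-batch gradient descent on
    # cross-entropy. No ground-truth labels are ever used.
    w, b = 0.0, 0.0
    n = len(queries)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, t in zip(queries, targets):
            p = 1.0 / (1.0 + math.exp(-(w * x + b)))
            # Gradient of cross-entropy between teacher prob t and student prob p.
            grad_w += (p - t) * x
            grad_b += (p - t)
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


if __name__ == "__main__":
    w, b = distill()
    print(f"student weight={w:.3f}, bias={b:.3f}")
```

Running `distill()` should return parameters close to the teacher's own (a weight near 3 and a bias near -1), even though the student only ever saw the teacher's responses to its queries.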

However, the company warned that the method can also be used for ‘illicit purposes’. For instance, competitors can use it to extract advanced capabilities from other labs in ‘a fraction of the time, at a fraction of the cost’ required to develop them independently.

Why does this matter?

The company said that such attacks are growing in intensity and sophistication and that the window to counter them is narrowing. The threat of distillation attacks, Anthropic said, extends beyond any single company or region. To address the issue, the company called for rapid, coordinated action among industry players, policymakers, and the global AI community.

The company’s blog went on to describe ‘illicitly distilled models’ as posing significant national security risks. According to the company, US AI companies build safeguards that prevent state and non-state actors from using AI for a range of malicious cyber activities, but illicitly distilled models are unlikely to retain these safeguards. As a result, the dangerous capabilities of these distilled models could proliferate without any protections.

Anthropic said that foreign labs that distil American models can then feed the unprotected capabilities into military, intelligence, and surveillance systems, allowing authoritarian governments to deploy frontier AI for a variety of offensive operations.


Notably, Anthropic does not offer commercial access to Claude in China, so all three labs had to circumvent this restriction. They did so using what Anthropic describes as Hydra Cluster architectures: networks of fraudulent accounts spread across Anthropic’s API and third-party cloud platforms. The frontier AI lab makes two arguments for why this is a bigger problem than mere IP theft. The first concerns safety; the second concerns export controls, since the US restricts chip exports to China, reportedly to slow down its frontier AI development. Anthropic argues that these attacks strengthen the case for chip controls, because running distillation at this scale still requires significant computing power.

The pushback

Meanwhile, Elon Musk took to his X profile to claim that the Dario Amodei-led AI lab is guilty of stealing training data at massive scale. “Anthropic is guilty of stealing training data at a massive scale and has had to pay multi-billion-dollar settlements for their theft. This is just a fact,” he wrote.

A screengrab of Elon Musk’s post on X slamming Anthropic’s report.


Anthropic has levelled serious accusations, even though its own models were reportedly trained on pirated data. Last year, the frontier lab led by Dario Amodei agreed to pay $1.5 billion to settle a first-of-its-kind AI copyright infringement lawsuit brought by authors. The Claude maker had reportedly used pirated copies of about 500,000 books to train its AI without compensating the authors.

Every major AI lab has trained its models on vast amounts of internet data without explicit permission from the creators, and AI labs have largely won the argument that scraping public data for training is legal or, at minimum, tolerable. That makes Anthropic’s complaint about others training on its outputs seem somewhat shallow.